You can't do science without measurement. That blunt fact might give us pause when people emphasize non-cognitive factors in student success and in efforts to boost it.

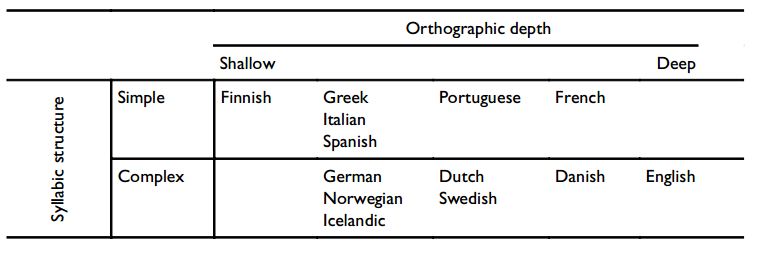

"Non-cognitive factors" is a misleading but entrenched catch-all term for factors such as motivation, grit, self-regulation, social skills. . . in short, mental constructs that we think contribute to student success, but that don't contribute directly to the sorts of academic outcomes we measure, in the way that, say, vocabulary or working memory do.

There is little doubt that measurement is a problem. A term like "self-regulation" is used in different senses: the ability to maintain attention in the face of distraction, the inhibition of learned or automatic responses, or the quelling of emotional responses. The relation among these senses is not clear.

Further, these constructs might be measured by self-ratings, teacher ratings, or various behavioral tasks.

Still, the measurement problem in non-cognitive factors shouldn't be overstated. We're not at ground zero on the problem. At the same time, we're far from agreed-upon measures. Just how big a problem is that?

It depends on what you want to do.

If you want to do science, it's not a problem at all. It's the normal situation. That may seem odd: how can we study self-regulation if we don't have a clear idea of what it is? Crisp definitions of constructs and taxonomies of how they relate are not prerequisites for doing science. They are the outcome of doing science. We fumble along with provisional definitions and refine them as we go along.

The problem of measurement seems more troubling for education interventions.

Suppose I'm trying to improve student achievement by increasing students' resilience in the face of failure. My intervention is to have preschool teachers model a resilient attitude toward failure and to talk about failure as a learning experience. Don't I need to be able to measure student resilience in order to evaluate whether my intervention works?

Ideally, yes, but lacking such a measure may not be an experimental deal-breaker.

My real interest is student outcomes like grades, attendance, dropout, completion of assignments, class participation and so on. There is no reason not to measure these as my outcome variables. The disadvantage is that there are surely many factors that contribute to each outcome, not just resilience. So there will be more noise in my outcome measure and consequently I'll be more likely to conclude that my intervention does nothing when in fact it's helping.

The advantage is that I'm measuring the outcome I actually care about. Indeed, there would not be much point in crowing about my ability to improve my psychometrically sound measure of resilience if such improvement meant nothing to education.
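The trade-off above, that a noisier outcome makes a real effect easier to miss, can be illustrated with a small simulation. This is only a sketch under made-up assumptions: the function name `detection_rate`, the effect size, the noise levels, and the group size are all hypothetical, and the significance check is a rough normal approximation, not a full analysis.

```python
import random
import statistics


def detection_rate(effect, noise_sd, n_per_group=50, n_trials=2000, seed=0):
    """Fraction of simulated studies in which a true effect is detected.

    All parameter values are illustrative assumptions, not estimates
    from any real intervention.
    """
    rng = random.Random(seed)
    detected = 0
    for _ in range(n_trials):
        # Control students: outcome is pure noise around zero.
        control = [rng.gauss(0.0, noise_sd) for _ in range(n_per_group)]
        # Treated students: same noise, shifted by the true effect.
        treated = [rng.gauss(effect, noise_sd) for _ in range(n_per_group)]
        diff = statistics.fmean(treated) - statistics.fmean(control)
        se = ((statistics.variance(control) + statistics.variance(treated))
              / n_per_group) ** 0.5
        # Rough two-sided 5% test using the normal approximation.
        if abs(diff / se) > 1.96:
            detected += 1
    return detected / n_trials


# Same true effect, measured against a focused outcome and against a
# broad outcome that many other factors also influence.
focused = detection_rate(effect=0.5, noise_sd=1.0)
noisy = detection_rate(effect=0.5, noise_sd=3.0)
print(f"detected with focused measure: {focused:.2f}")
print(f"detected with noisy outcome:   {noisy:.2f}")
```

The intervention helps equally in both cases; only the amount of unrelated variation in the outcome differs. The noisier outcome leads to the "does nothing" conclusion far more often, which is the cost of measuring grades or attendance directly. Larger samples are the usual way to buy that sensitivity back.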

There is a history of this approach in education. It was certainly possible to develop and test reading instruction programs before we understood and could measure important aspects of reading such as phonemic awareness.

In fact, our understanding of pre-literacy skills has been shaped not only by basic research, but by the success and failure of preschool interventions. The relationship between basic science and practical applications runs both ways.

So although the measurement problem is a troubling obstacle, it's neither atypical nor final.

References

Duckworth, A. L., & Quinn, P. D. (2009). Development and validation of the Short Grit Scale (GRIT–S). Journal of Personality Assessment, 91, 166-174.

Sitzmann, T., & Ely, K. (2011). A meta-analysis of self-regulated learning in work-related training and educational attainment: What we know and where we need to go. Psychological Bulletin, 137, 421-442.
