
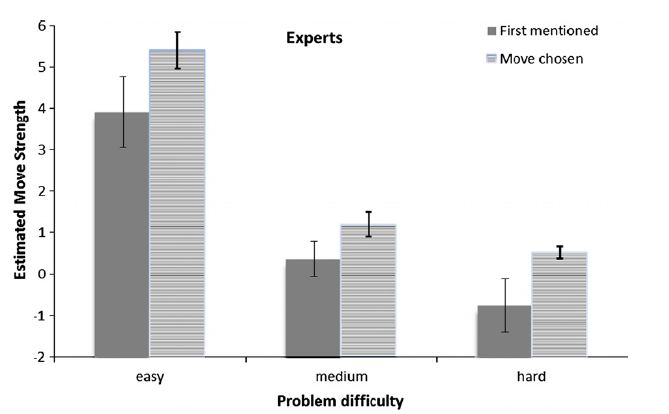

I made another of my garage-band quality videos, this one on the relationship of science and education, titled "Is Education an Art or a Science?"

Much has been written in the last ten years about intuition, especially expert intuition. What’s so fascinating about intuition, of course, is the idea that one’s mind may work on a problem without one being aware of it. Keith Richards put it this way:

“Somewhere in the back of your mind, you’re thinking about this chord sequence or something related to a song. No matter what the hell’s going on. You might be getting shot at, and you’ll still be ‘Oh! That’s the bridge!’ And there’s nothing you can do; you don’t realize it’s happening. It’s totally subconscious, unconscious, or whatever.”

Malcolm Gladwell’s book Blink was largely devoted to this phenomenon. Other books--e.g., Tim Wilson’s Strangers to Ourselves and Danny Kahneman’s Thinking, Fast and Slow--have summarized some of the research showing that such unconscious cognition occurs, but Gladwell differed in suggesting that at times we’d be better off relying on intuition than on thinking. Some researchers--most consistently Gerd Gigerenzer at the Max Planck Institute, but others, including Kahneman at times--suggest that this advice might be sound.

A recent study, however, suggests you’re better off thinking. A group of researchers at Florida State and the University of Leuven (Moxley et al., 2012) presented expert chess players with complex chess positions and varied the amount of time players were allowed to deliberate before they had to pick a move. The question was whether players benefited from more time. Experimenters also asked subjects to “think aloud” as they deliberated, so researchers could evaluate whether the first move subjects contemplated turned out to be the best one, even if further thought led them to pick another, inferior move.
(Move strength was evaluated by a computer program designed to make such evaluations--I won’t pretend to be able to evaluate its validity.) Here are the results, for three different levels of problem difficulty. This pattern--improved moves with more deliberation--was also observed in less expert players, but that finding is not terribly surprising. Expertise is thought to be the basis of intuition, so we expect that rapid intuitions of less expert players will not be as good, and that they will benefit from slower, deliberative processes.

More surprising is that experts showed the same benefit. Other studies (e.g., Burns, 2004) using a different methodology drew a different conclusion. For example, when playing speeded chess (which allows very little time for each move), the differences between good, very good, and expert players remain largely intact. So whatever it is that makes the best players the best, it can't be slow, deliberative processes, because there's no time for these processes to operate in speeded chess. It's been thought that the rapid, intuitive processes are due to pattern recognition of game positions.

Moxley et al. argue that the difference between their results and previous ones may lie in the fact that they examined move selection, whereas Burns (and other researchers) examined the outcome of entire games. In the final analysis, the most apt conclusion seems to be that both pattern recognition and deliberative cognition are major contributors to expertise.

Burns, B. D. (2004). The effects of speed on skilled chess performance. Psychological Science, 15, 442–447.

Moxley, J. H., Ericsson, K. A., Charness, N., & Krampe, R. T. (2012). The role of intuition and deliberative thinking in experts' superior tactical decision-making. Cognition, 124, 72-78.

Several people have sent this post from the New York Times web site to me. The author, Gary Gutting, is a professor of philosophy at Notre Dame, and he argues that data from the social sciences are less useful than many people seem to believe.

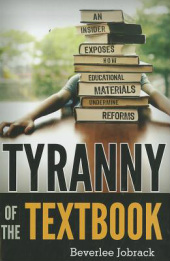

Here is the nub of his argument:

Point 1: The author points out that some conclusions have more data behind them than others: initially, Higgs suggested something like the boson might exist. Today, there are many more data consistent with the boson and its suggested characteristics.

Point 2: The best supported conclusions of the core natural sciences (physics, chemistry, biology) are well established, but the best developed social sciences (he cites economics as an example) “have nothing like this status” in terms of the reliability of their findings.

Point 3: “While many of the physical sciences produce many detailed and precise predictions, the social sciences do not. The reason is that such predictions almost always require randomized controlled experiments which are seldom possible when people are involved.”

Point 4: He closes by suggesting that results from social sciences not be ignored, but that we must recognize their limitations. “At best, they can supplement the general knowledge, practical experience, good sense and critical intelligence that we can only hope our political leaders will have.”

There are several significant problems here.

Point 1: Gutting is right. Better supported conclusions are more reliable.

Point 2: Gutting argues that findings from social sciences are less reliable than those of natural sciences, and so should not be trusted. First, if our goal is to use science to inform public policy (his stated goal), then the question is whether social science findings add value compared to not having the findings in hand. It doesn’t really matter if they are as good as some other science. Second, on the point of reliability, Gutting is wrong. Some findings in social sciences are known with a high degree of reliability, e.g., the consequences of schedules of practice on memory.
On this point and point 3, Gutting paints with an extraordinarily broad brush, seeking to characterize the nature of “social sciences” in general, and to contrast them with all of the “natural sciences.”

Point 3: Regarding the use of random control designs, Gutting is again in error. Most of the experiments I have done in my career have been laboratory studies using random control designs. Readers of this blog are of course aware that many studies in education use this design. Gutting also fails to note that some areas of biology (e.g., evolutionary biology) and of physics (e.g., astrophysics) make extensive use of data that are not produced by randomized control trials.

Point 4: Inviting data from social sciences to “supplement” good old common sense is tantamount to inviting people to ignore the data, or more likely to interpret the data as consistent with their beliefs, a phenomenon described by social scientists.

I am suspicious that this post was written as part of an experiment by nefarious social scientists to see how readers of the Times would react.

In Tyranny of the Textbook, Beverlee Jobrack offers many observations that you’ve heard before. Standards alone won’t improve achievement. Testing alone won’t improve achievement. Technology alone won’t improve achievement. What makes the book worth reading is not Jobrack’s thoughts on these topics, because they are, frankly, fairly ordinary. But her thoughts on the textbook industry make the book well worth your time. The kernel of her argument has three pieces:

(1) Textbook development: Textbooks are developed based on tradition and based on competitors’ products. No one in the publishing industry worries about whether the materials are effective. As Jobrack notes, publishers are for-profit enterprises. They need decision-makers to adopt their textbooks. Decision-makers do not base adoptions on effectiveness, or at least publishers believe that they do not.

(2) Textbook adoption: What factors drive adoptions? To the extent that teachers have any input, it comes from teacher leaders, and they already teach well. They have an existing set of lesson plans that work well, so they are not interested in a textbook that would necessitate rewriting all of those lesson plans. New textbooks therefore tend to be conservative. Further, just three publishing companies account for 75% of the market, so most of the books look the same. Consequently, relatively trivial features have an outsize influence on adoption decisions. Trivial features like the cover design. Like the font size. Like whether the important features are clearly labeled or a bit more difficult to find. Content matters to adoptions, according to Jobrack, only insofar as the publishers ensure that all of the state standards are “covered.” But she goes on to point out that there is little or no attention paid to ordering and presenting this content in a way that ensures that students learn. Again, effectiveness of learning is simply not on the publishers’ radar screen.

(3) Why textbooks matter: Jobrack argues that textbooks are hugely important because they constitute a de facto curriculum. Beginning teachers are overwhelmed by the prospect of writing lesson plans, and so depend heavily on instructional materials provided by publishers.

Is Jobrack right about all this? She ought to know whereof she speaks. She was promoted through the editorial ranks until she was the editorial director of SRA/McGraw-Hill.
Still, we should bear in mind that these are mostly Jobrack’s impressions, not a systematic study of publishing business practices. I admit that I’m probably more ready to believe Jobrack on publishing because her description so often matches my own experience. Like the beginning teachers she describes, when I first started teaching cognitive psychology, I relied heavily on published materials. I laid out four textbooks on my desk, used the sequence of topics they all shared, and cobbled together lectures by stealing the best stuff from each. I saw the conservatism Jobrack describes much later when I prepared to write my cognitive textbook and told my editor that I wanted to do something really different from what was currently on the market. Her response: “Okay, but don’t make it more than about 20% different or you’ll never get any adoptions.”

A point that Jobrack makes indirectly, but that strikes me as more important than she realizes, is the role of measurement. Jobrack notes that publishers would be motivated to make textbooks effective if that drove the market. Well, in order to know whether they are effective, we (teachers, administrators, parents, researchers, policymakers) need to agree on what we mean by effective and on a way to measure it. The textbook problem brings fresh urgency to this issue.

Whether Jobrack is right or not, I hope this book will prompt greater discussion about textbooks, and greater scrutiny of adoption processes.

You may remember The Bell Curve. The book, by Richard Herrnstein and Charles Murray, was published in 1994, and it argued that IQ is largely determined by genetics and little by the environment. It further argued that racial differences in IQ test scores were likely due to genetic differences among the races.

A media firestorm ensued, with most of the commentary issuing from people without the statistical and methodological background to address the core claims of the book. The American Psychological Association created a panel of eminent researchers to write a summary of what was known about intelligence, which would presumably contradict many of these claims. The panel published the article in 1996, a thoughtful rebuttal of many of the inaccurate claims in The Bell Curve, but also a very useful summary of what some of the best researchers in the field could agree on when it came to intelligence. Now there's an update. A group of eminent scientists thought the time was ripe to provide the field with another status-of-the-field statement. They argue that there have been three big changes in the 15 years since the last report: (1) we know much more about the biology underlying intelligence; (2) we have a much better understanding of the impact of the environment on intelligence, and that impact is larger than was suspected; (3) we have a better understanding of how genes and the environment interact. Some of the broad conclusions are listed below (please note that these are close paraphrases of the article's abstract).

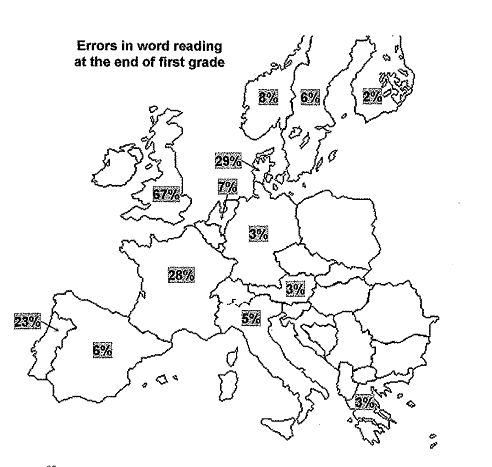

Neisser, U., Boodoo, G., Bouchard, T. J., Jr., Boykin, A. W., Brody, N., Ceci, S. J., Halpern, D. F., Loehlin, J. C., et al. (1996). Intelligence: Knowns and Unknowns. American Psychologist, 51, 77.

Nisbett, R. E., Aronson, J., Blair, C., Dickens, W., Flynn, J., Halpern, D. F., & Turkheimer, E. (2012, January 2). Intelligence: New Findings and Theoretical Developments. American Psychologist, 67, 130-159.

One finding (from Seymour, Aro & Erskine, 2003) illustrated in one figure (Figure 5.3 from Stan Dehaene's marvelous book, Reading in the Brain). The figure shows errors in word reading at the end of first grade, by country. Are we to conclude that the differences are due to educational practice? The vaunted Finnish system shows smashing results even at this early age, whereas the degenerate British system can't get it right?

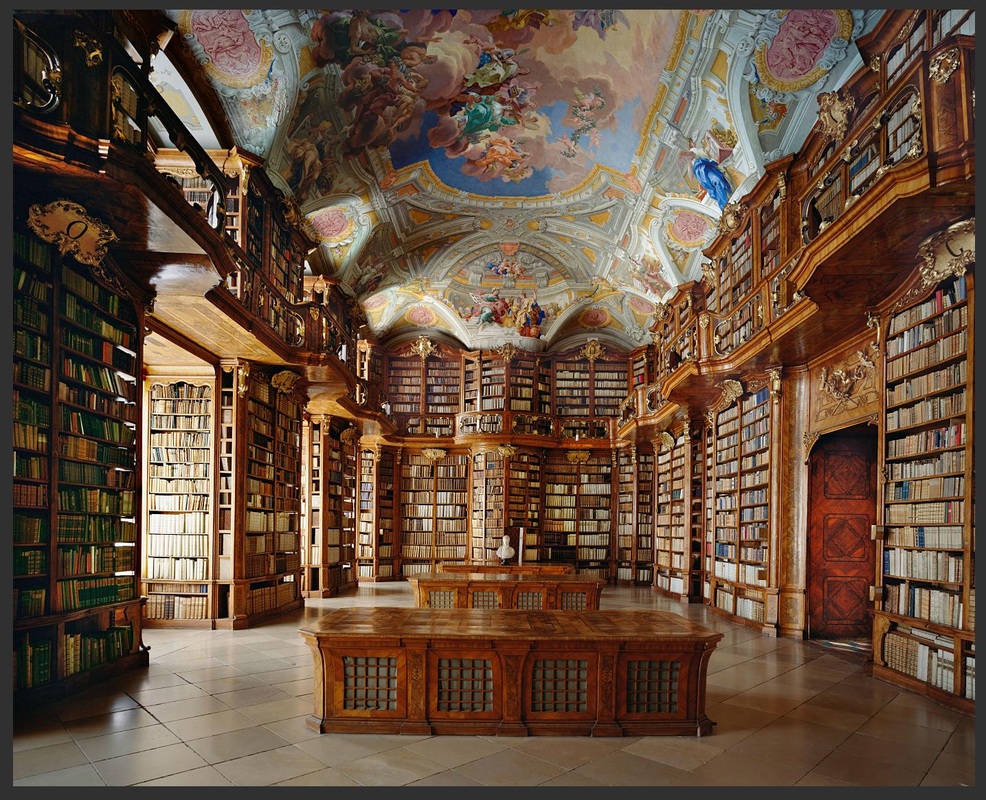

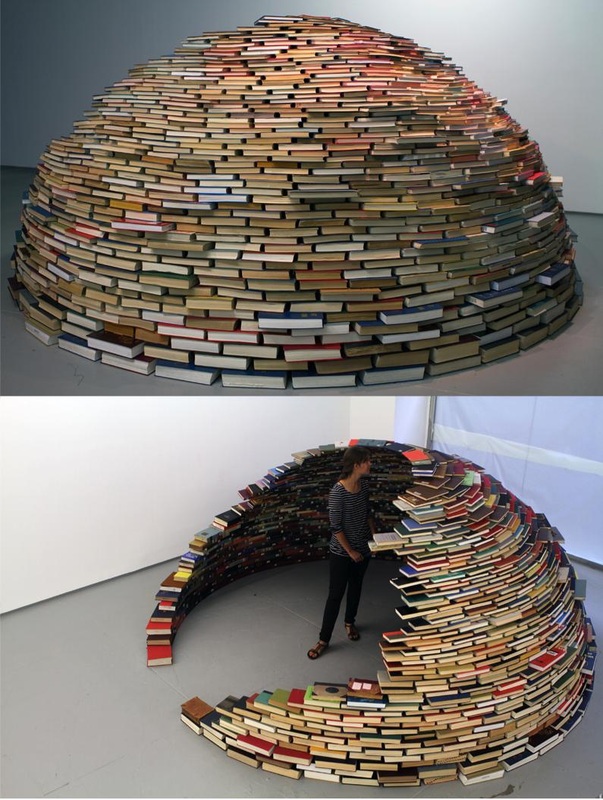

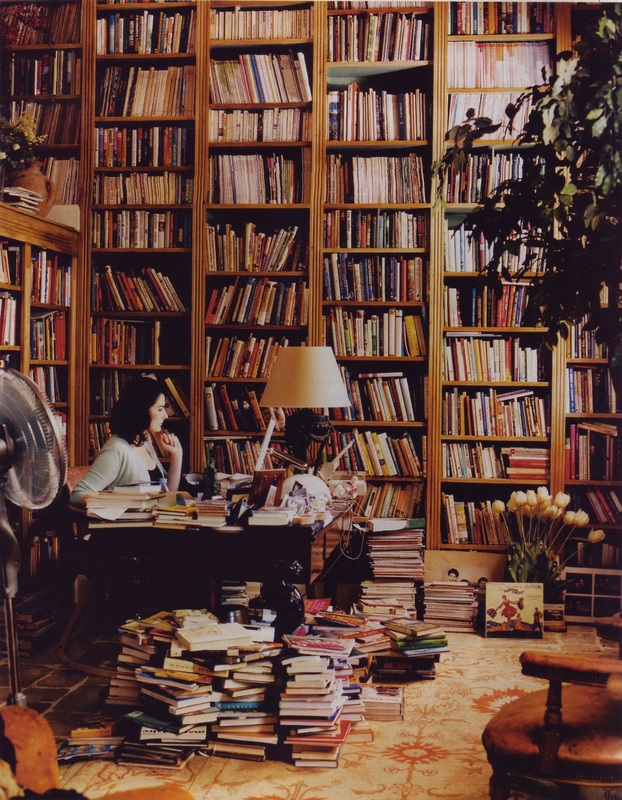

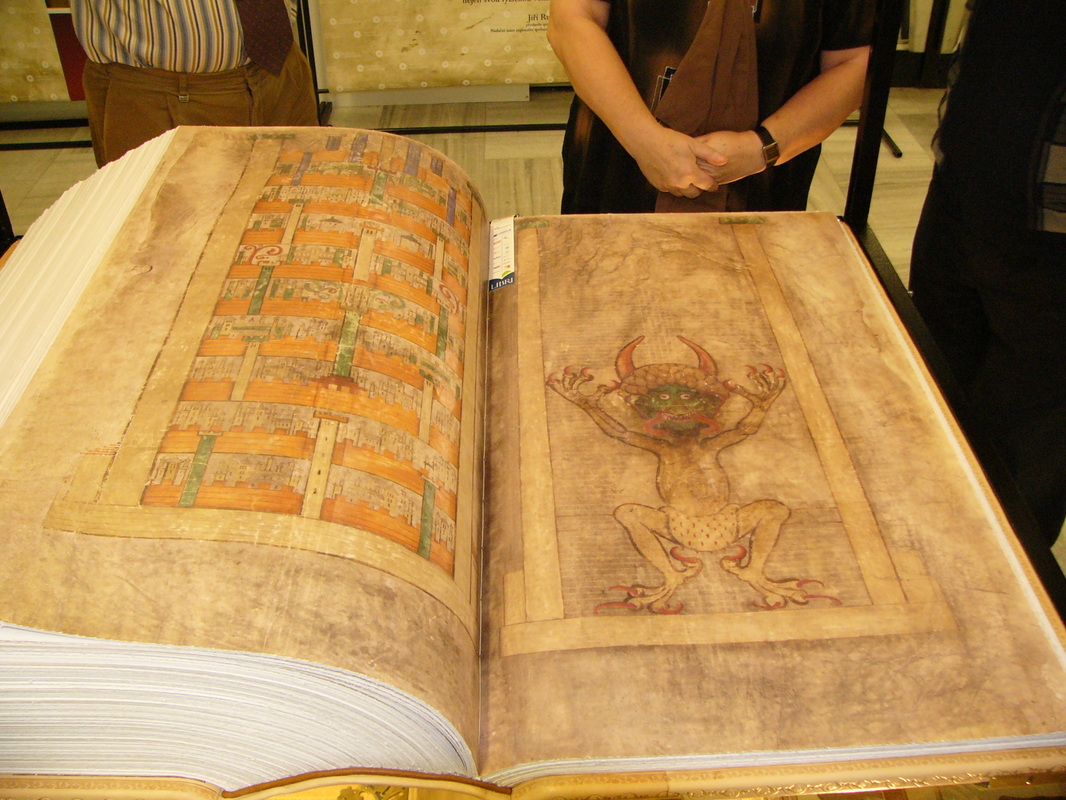

Countrywide differences in instruction could play a role, but Dehaene emphasizes that the countries in which children make a lot of errors--Portugal, France, Denmark, and especially Britain--just happen to have deeper orthographies. A shallow orthography means that there is a straightforward correspondence between letters and phonemes. English, in contrast, has one of the deepest (most complex) orthographies among the alphabetic languages: for example, the letter combination "gh" is pronounced differently in "ghost," "eight," and "enough." In short, children learning to read English have a difficult task in front of them--and so too, therefore, do teachers.

Is there a lesson to be drawn here? To me, the difficult orthography of English highlights the importance of careful sequencing in the learning of grapheme-phoneme pairs, along with a limited number of sight words--sequencing that exploits the regularities that exist, and brings children as swiftly as possible to the point that they can read texts and so feel a sense of accomplishment. In Italy, for example, the order in which grapheme-phoneme pairs are taught would matter much less because there simply is not that much to learn. Several months of instruction is sufficient for most children to reach a point that they can decode most texts.

The deep orthography of English also sheds light on why American schools spend as much time on English-language arts (ELA) as they do: something like two-thirds of instructional time in the first grade (NICHD Early Child Care Research Network, 2002). One might draw the conclusion that the difficulty of the task in reading requires enormous amounts of time.
Another point of view--one I share--is that this practice places too much emphasis on ELA at the expense of other content, and runs a high risk of discouraging kids who might become passionate about science, or history, or geography, but won't because the early elementary years contain so little content beyond ELA and mathematics. I think it would be worth our accepting slower progress in reading in exchange for broader subject-matter coverage in early grades--coverage that will actually pay dividends for reading comprehension in later grades.

Dehaene, S. (2009). Reading in the Brain. New York: Viking.

National Institute of Child Health and Human Development Early Child Care Research Network (2002). The Relation of Global First-Grade Classroom Environment to Structural Classroom Features and Teacher and Student Behaviors. The Elementary School Journal, 102, 367-387.

Seymour, P. H. K., Aro, M., & Erskine, J. M. (2003). Foundation literacy acquisition in European orthographies. British Journal of Psychology, 94, 143-174.

Most educators are avid readers. Most avid readers love books not only for the purpose of reading them, but as physical objects. There is a marvelous section of the website Reddit called BookPorn, in which users submit beautiful and interesting pictures of books: libraries, book stores, unusual book shelves, individual volumes of interest, and the occasional celebrity. Here are a few samples.

Library, Trinity College, Dublin

Purpose: The goal of this blog is to provide pointers to scientific findings that are applicable to education that I think ought to receive more attention.