Further, we suggested that all-purpose comprehension processes (e.g., monitoring whether you’re understanding, remembering to coordinate meaning across sentences and paragraphs) make a contribution but are not very susceptible to practice. As evidence, we cited eight meta-analyses that examined data from studies of comprehension strategy instruction. All of these analyses showed a sizable benefit for strategy instruction, but the amount of instruction or practice had no impact on the benefit. Our interpretation was that strategy instruction told students (who didn’t already know it) that things like coordinating meaning are good things to do, but such instruction can’t tell you how to do it, because the how depends on the particular meaning. The instruction can’t be all-purpose.

A new meta-analysis shows the same pattern of data.

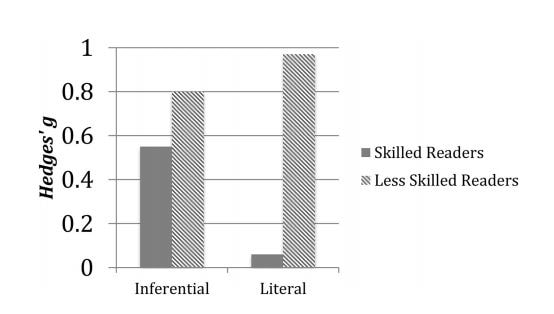

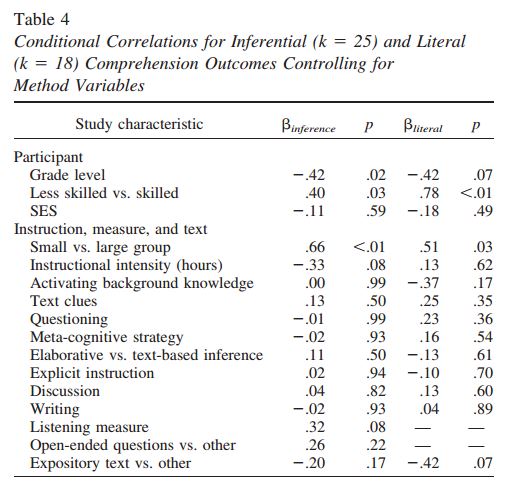

Amy Elleman summarized data in a meta-analysis of 25 studies that used various methods to teach children to make inferences and to apply them to texts. She examined three separate measures of comprehension: general comprehension, inferences in particular, and understanding of things literally stated in the text. She also separated the benefit of instruction for skilled and less-skilled readers.

The data showed “moderate to large” effects of instruction on general comprehension and on making inferences for both skilled and less-skilled readers. The pattern differed for the “literal” measure, however, with skilled readers showing almost no gain but less-skilled readers again showing a sizable gain.

Especially noteworthy to me was that Elleman observed no effect of what she called “Instruction intensity,” i.e., the number of hours devoted to inference instruction, just as Lovette and I noted for the other eight meta-analyses.

As Willingham & Lovette suggested, comprehension instruction is a great idea, because research consistently shows a large benefit of such instruction. But just as consistently, it shows that brief instruction leads to the same outcome as longer instruction.
